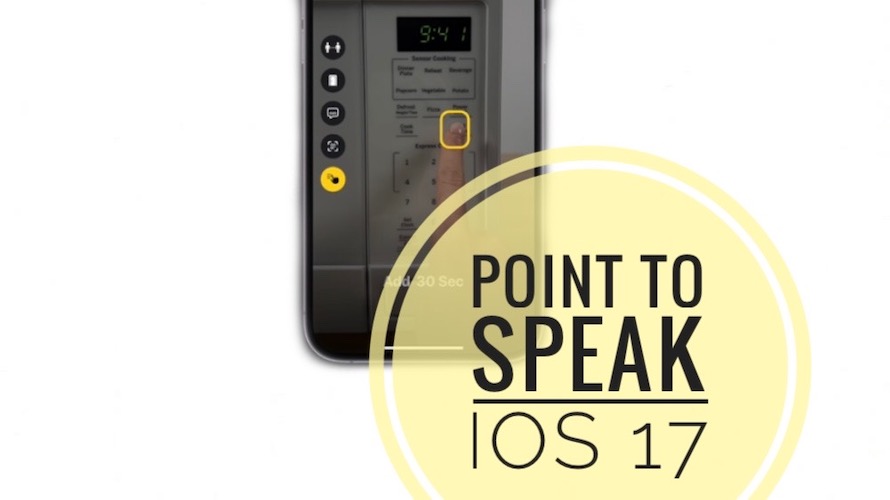

Detection Mode In Magnifier: Point And Speak iOS 17 Feature

Detection Mode in Magnifier brings another highly useful Accessibility feature for users who are visually impaired. Point and Speak in Magnifier uses the iPhone’s camera to read out the labels on physical objects for users with low vision!

Point And Speak Feature

After updating to iOS 17, your iPhone will be able to detect the labels that you point at and read out the text. This allows blind users to interact with household appliances such as a microwave.

Apple demonstrates how Point and Speak works in the following preview video:

Notice how the Magnifier app detects which label the finger is pointing at, scans the text and speaks out the label content for each key, allowing the user to properly set the microwave!

Fact: According to Apple, Point and Speak “combines input from the camera, the LiDAR Scanner, and on-device machine learning to announce the text”!

Point And Speak On iPad?

Yes, this new Accessibility feature is also available on iPads running iPadOS 17 or later, which is scheduled to roll out this fall!

Important: Your Apple device requires a LiDAR scanner to be compatible with this new Detection Mode, as confirmed by Apple.

Point And Speak In Magnifier

This feature is built into the native Magnifier app. It works hand-in-hand with VoiceOver and can be used alongside other similar features including People Detection, Door Detection and Image Descriptions!

Point And Speak Not Available?

If you installed the iOS 17 beta or any other compatible version but this new Detection Mode feature isn’t available, make sure that your device is compatible.

As mentioned above, LiDAR scanner hardware is required! This means that you need an iPhone 12 Pro or newer, or a 2020 iPad Pro or later!

Important: Only the Pro iPhone and iPad models have this depth sensor included!

The following languages are supported: English, Spanish, French, Italian, German, Portuguese, Chinese, Cantonese, Korean, Japanese, and Ukrainian.

Fact: This article will be updated throughout the year as more info becomes available! We expect an extended preview of these Detection Mode features during the WWDC 2023 keynote, scheduled for June 5.

What do you think about the new Point and Speak accessibility feature? Do you have any issues using it? Share your feedback in the comments!

Related: Apple has also previewed Live Speech and Personal Voice features for iOS 17, iPadOS 17 and macOS 14!